Your AI Product Doesn't Need Onboarding — It Needs Trust

While building Lucius AI, I spent ages on the onboarding flow.

Guided tours, feature walkthroughs, interactive demos, step-by-step tutorials… I built it all. Then users showed up, poked around for five minutes, and left.

My first instinct: onboarding isn’t good enough. Maybe the guidance isn’t clear? Too many steps?

Then it hit me. I was solving the wrong problem.

Users didn’t just “not know how to use it.” The deeper issue was that they didn’t trust it.

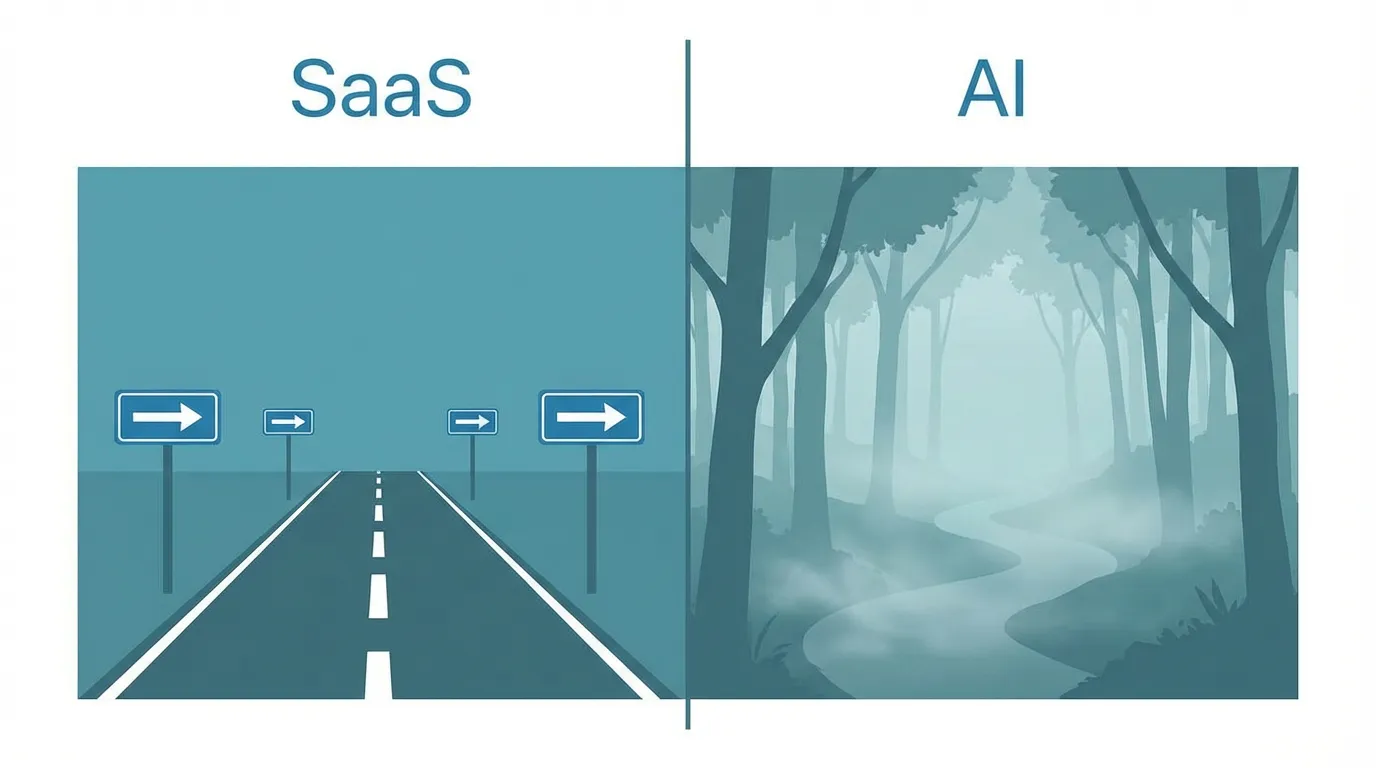

SaaS Onboarding Playbooks Don’t Work for AI

Traditional SaaS onboarding solves one problem: feature discovery.

Notion teaches you to create pages. Figma teaches you to draw frames. Slack teaches you to set up channels. Deterministic operations — click here, that happens. Every time.

So the SaaS onboarding playbook is mature: tooltips, checklists, empty states, progressive disclosure. These work because the underlying assumption holds — product behavior is predictable.

AI products break that assumption.

Same prompt, different times, different results. Users don’t know where AI’s capabilities end. Don’t know when it’ll make mistakes. Don’t know what those mistakes will cost.

This isn’t a “how to use it” problem. It’s a “can I trust it” problem.

Applying SaaS onboarding thinking to AI products is like teaching someone to ride a horse the way you’d teach them to drive a car — you can explain every control, but the horse has a mind of its own.

What the Data Says

The trust problem isn’t just my gut feeling. The data points the same way.

Pendo’s 2025 report shows average SaaS first-month retention at just 39%. But what’s more interesting is a16z’s finding in “Retention Is All You Need”: a massive chunk of AI product churn in the first three months comes from “AI tourists.” They drop $20 to try it for a month, think “that’s it?” and leave.

a16z’s late-2025 data shows ChatGPT’s DAU/MAU at 36% and its M12 desktop retention at 50%. Gemini’s M12 was just 25%. But interestingly, by early 2026, Gemini’s reputation has clearly surpassed ChatGPT’s. Product capability shifts, and trust shifts with it, which suggests trust isn’t one-and-done. It’s dynamic.

Even more telling is Sora. 12M downloads, D30 retention under 8%. The benchmark for solid consumer apps is 30%. OpenAI has the strongest brand trust in the space, but the gap between Sora’s actual experience and user expectations was massive — brand trust can’t paper over a product experience gap.

What Actually Works Isn’t Onboarding — It’s Progressive Trust

Looking at AI products that get this right, I noticed a shared pattern: they don’t rush users into handing over the steering wheel.

I call it progressive trust, in five levels:

Level 1 — Demonstrate: Let users see what AI can do. Zero risk. ChatGPT’s blank chat box is this level. Ask anything, get an answer. Wrong? Doesn’t matter — nothing happened.

Level 2 — Suggest: AI gives suggestions, user decides. Cursor’s Tab completion fits here. AI suggests the next line of code. Hit Tab to accept, or ignore it. You’re fully in control.

Level 3 — Confirm: AI prepares a complete plan, waits for your approval before executing. Devin’s model — it builds a full changeset, you review the diff and hit approve.

Level 4 — Delegate: AI executes autonomously, you audit after the fact. Intercom’s Fin lives here: auto-replies to customer questions, company reviews performance periodically.

Level 5 — Trust: AI runs fully autonomously, only reporting anomalies. Very few products dare go here.

This isn’t a strict linear path — some users skip levels, others stay at one level for a long time. The point isn’t to rush everyone to Level 5. It’s to make each level good enough that users choose to upgrade.
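
One way to make the five levels concrete is to treat them as an explicit setting that decides what the AI may do and what the user sees. The sketch below is only an illustration of that idea, not how any product mentioned here is built; `TrustLevel`, `Policy`, `policy_for`, and `change_level` are hypothetical names.

```python
from dataclasses import dataclass
from enum import IntEnum


class TrustLevel(IntEnum):
    DEMONSTRATE = 1  # AI shows output only; nothing is executed
    SUGGEST = 2      # AI proposes, user accepts or ignores each suggestion
    CONFIRM = 3      # AI prepares a full plan, user approves before execution
    DELEGATE = 4     # AI executes, user audits afterwards
    TRUST = 5        # AI executes, only anomalies are reported


@dataclass(frozen=True)
class Policy:
    executes: bool        # may the AI carry out actions on its own?
    needs_approval: bool  # must the user approve before execution?
    report: str           # "none", "all", or "anomalies" (illustrative values)


def policy_for(level: TrustLevel) -> Policy:
    """Map a trust level to what the AI is allowed to do at that level."""
    if level <= TrustLevel.SUGGEST:
        return Policy(executes=False, needs_approval=False, report="none")
    if level == TrustLevel.CONFIRM:
        return Policy(executes=True, needs_approval=True, report="all")
    if level == TrustLevel.DELEGATE:
        return Policy(executes=True, needs_approval=False, report="all")
    return Policy(executes=True, needs_approval=False, report="anomalies")


def change_level(current: TrustLevel, requested: TrustLevel, user_opted_in: bool) -> TrustLevel:
    """The level only changes when the user chooses it; skipping levels is allowed."""
    return requested if user_opted_in else current
```

The design choice worth noticing is in `change_level`: the product never raises the level by itself. Users move up (or skip ahead) only when they opt in.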

How Great Products Do It

Products with existing trust to borrow (Cursor forking VS Code, Notion embedding AI, Copilot inside GitHub) have it easier — just layer AI onto existing workflows.

More interesting are B2B AI products with no existing trust to borrow. They have to build from zero, facing enterprise customers with extremely low risk tolerance.

Harvey AI: Winning 42% of Top Clients in the Industry Least Likely to Trust AI

Legal might be the industry with the lowest AI tolerance. One hallucination could cause a legal incident. One bad citation could cost a firm a client.

Harvey captured 42% of the Am Law 100 (America’s 100 largest law firms) within three years, approaching $100M ARR at a $5 billion valuation. How did it manage that in an industry where “AI hallucination = career-ending incident”?

Step one: Trust by association. Harvey partnered with OpenAI to train specialized legal models, formed a strategic alliance with LexisNexis (the legal industry’s definitive data source), and partnered with PwC to enter the corporate legal market. Each partner wasn’t just a channel — it was a trust endorsement. “LexisNexis trusts Harvey” beats any demo.

Step two: Make verification part of the product. Harvey doesn’t just do RAG. It decomposes generated answers into individual factual claims, cross-references each against authoritative sources, and proactively flags uncertainty. Lawyers don’t see “what AI said” — they see “what AI said, what evidence supports it, and how confident it is.” This isn’t a feature. It’s trust infrastructure.

Step three: Start with the most conservative clients. Harvey’s co-founder said: “Law firms trust Azure, and we want law firms to trust us.” Their strategy wasn’t volume — it was landing the most prestigious, most conservative firms first. When Allen & Overy (a global top-tier firm) uses Harvey, the procurement decision for every other firm shifts from “should we trust AI” to “our competitors are already using it — what are we waiting for?”

Textbook B2B trust strategy: borrow authority → make verification visible → start with the hardest customers.

Devin: Let Users Be the Supervisor

Devin’s trust strategy is completely different. No borrowed authority — just radical transparency.

The repo setup process doubles as a capability demo — installing dependencies, running lint, running tests. Every step proves “I understand your codebase.” By the time setup completes, trust is already established.

More importantly: four fully visible panels — Shell, Browser, Editor, Planner. You always know what Devin is doing, why, and how far along it is. The Ground Truth called it “the best AI coding product onboarding experience.”

People don’t fear AI making mistakes. They fear not knowing what AI is doing. Full transparency doesn’t eliminate errors — it eliminates fear.

Intercom Fin: Progressive Deployment

Fin needs to build two layers of trust: first with enterprise customers (“this AI won’t wreck my customer relationships”), then with end users (“this bot can actually help me”).

At Fin 2’s launch, the numbers were a 51% auto-resolution rate, 99.9% accuracy, and $0.99 per resolution. Top customers now exceed a 65% resolution rate.

Its deployment strategy is a textbook example of progressive trust: start with simple issues (return/exchange queries), verify it works, then expand to complex scenarios (billing disputes). Enterprise customers don’t hand all customer service traffic to AI at once — they add it incrementally.
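
The "start simple, verify, then expand" loop can be sketched as a staged rollout. This is a minimal illustration under assumptions of my own, not Intercom's implementation: the `Rollout` class, the topic names, and the thresholds (`min_resolution_rate`, `min_sample`) are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Rollout:
    # Topics ordered from lowest to highest risk; names are illustrative.
    stages: list[str]
    min_resolution_rate: float = 0.5  # hypothetical bar for expanding
    min_sample: int = 100             # hypothetical minimum conversations
    enabled: int = 1                  # number of stages the AI currently handles
    stats: dict[str, list[int]] = field(default_factory=dict)  # topic -> [resolved, total]

    def handles(self, topic: str) -> bool:
        """True if the AI is currently allowed to answer this topic."""
        return topic in self.stages[: self.enabled]

    def record(self, topic: str, resolved: bool) -> None:
        """Log whether the AI resolved a conversation on this topic."""
        resolved_count, total = self.stats.get(topic, [0, 0])
        self.stats[topic] = [resolved_count + int(resolved), total + 1]

    def maybe_expand(self) -> bool:
        """Enable the next stage once the latest enabled one has proven itself."""
        if self.enabled >= len(self.stages):
            return False
        resolved, total = self.stats.get(self.stages[self.enabled - 1], [0, 0])
        if total >= self.min_sample and resolved / total >= self.min_resolution_rate:
            self.enabled += 1
            return True
        return False


rollout = Rollout(stages=["returns", "billing_disputes"], min_sample=4)
for ok in (True, True, True, False):
    rollout.record("returns", ok)
expanded = rollout.maybe_expand()  # returns stage met the bar, so billing is enabled
```

Anything the AI does not yet handle would go to a human, so the enterprise customer only ever widens the scope after seeing evidence from the previous stage.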

Three cases, three trust strategies: Harvey borrows authority, Devin uses transparency, Fin uses progressive deployment. Same underlying logic — prove you’re trustworthy first, then ask for more access.

“AI Tourists” Aren’t Your Fault

a16z made a point I deeply agree with: don’t optimize onboarding for AI tourists.

High churn in the first three months is largely structural. AI is too new, and too many people are merely curious. They spend $20 for a month of trying things out. That’s not your onboarding failing; they were never your users to begin with.

ChatGPT’s data reveals something even more interesting — the “smile curve.” Users who churned early come back when the product improves.

So the metric to watch isn’t M1 retention — it’s retention after M3. a16z recommends M12/M3 as the core metric for evaluating AI product quality. If that ratio is healthy, you’re keeping real users. Tourist churn shouldn’t be your primary anxiety.
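
To make the ratio concrete, here is a small worked example. It assumes M12/M3 means a cohort's active users in month 12 divided by its active users in month 3, which is my reading of the notation; the cohort numbers are made up and `retention_ratio` is a hypothetical helper.

```python
def retention_ratio(active_by_month: dict[int, int], late: int = 12, early: int = 3) -> float:
    """Share of users active in the `early` month who are still active in the `late` month."""
    early_users = active_by_month[early]
    if early_users == 0:
        raise ValueError("no active users in the early month")
    return active_by_month[late] / early_users


# Made-up cohort: 1,000 signups, heavy tourist churn, then a stable core.
cohort = {0: 1000, 1: 400, 3: 200, 12: 150}

m1_retention = cohort[1] / cohort[0]   # 0.4: looks alarming on its own
m12_over_m3 = retention_ratio(cohort)  # 0.75: most post-tourist users stayed
```

In this invented cohort, M1 retention alone suggests a leaky product, while the M12/M3 ratio shows that three quarters of the users who survived the tourist phase were still around nine months later.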

AI Onboarding Never “Ends”

Traditional SaaS onboarding has an endpoint. User completes the checklist, enters normal usage, onboarding done.

AI products don’t have that endpoint.

Models upgrade. A model that couldn’t generate images last month suddenly can this month. Text-only conversations last week become computer-operating agents this week. Capability boundaries shift, user expectations shift with them.

Every capability change is a new trust test. Users need to recalibrate: What can it do now? Where are the limits? Does my previous trust still hold?

Notion’s approach: dedicated guidance for each new AI feature. Devin’s approach: continuously learning user preferences through its knowledge base, letting trust evolve alongside usage habits.

This isn’t an onboarding problem anymore. It’s an ongoing trust relationship between product and user. Like trust between people, it isn’t built in a single moment, and it has to keep being earned.

So What Do You Do

If you’re building an AI product, three pieces of advice:

Stop optimizing “guided flows,” start designing “trust paths.” Don’t ask “where should users click first” — ask “when does the user first experience AI’s value?” That moment is the real aha moment.

Let users be supervisors, not passengers. Transparency isn’t nice-to-have — it’s the prerequisite for trust. Devin’s four panels, Cursor’s inline diff, Fin’s answer attribution — all saying the same thing: let users see what AI is doing.

Design for core users, not tourists. The first three months of churn data will make you anxious. Don’t be. Look at retention after M3. If core users stay, tourists leaving is fine.

Building Lucius AI taught me one thing: the trust window for AI products is extremely short. Not a 14-day trial. Not a 7-day onboarding flow. Maybe just the first few interactions.

In those interactions, you’re not teaching users how to use your product. You’re answering one question:

Why should I trust you?

Answer that poorly, and the best onboarding in the world won’t help. Answer it well, and onboarding barely matters.

Trust has no shortcuts.