On Day One with ClawdBot, I Considered Firing My Entire Team

On my first day with ClawdBot, a dangerous thought flashed through my mind: I could fire my entire team.

Not out of anger. Not as a joke. It was a conclusion I had reached through serious calculation.

Then one thing stopped me. Not cost, not legal concerns, not the so-called “human touch.” It was something hard to define but impossible to ignore: Taste.

What Happened That Day

Let me explain what “day one” actually looked like.

It’s not about how much work AI helped me do—that story’s been told to death.

It’s that I suddenly realized an entire category of cost disappears when you collaborate with AI.

I didn’t need alignment. No two-hour sessions explaining “why we’re doing this” or “the logic behind this decision.” AI doesn’t need convincing, doesn’t need to understand the company vision, doesn’t need to buy into my direction. It just needs to know the task.

I didn’t need to push. No chasing progress, no managing emotions, no choosing between “capable but poor attitude” and “great attitude but lacking skill.” AI has no emotions, no attitude problems, no “off days.”

I didn’t need to worry about fit. Every hire is a gamble: does this person’s skill set match the role? Will we discover in three months that they’re the wrong fit? What then? AI doesn’t have a “fit” problem. It only evolves. Not good enough today? Give feedback, and it’s better tomorrow.

That evening, I realized something:

I used to think the cost of management was necessary. Now I see it’s just the tax on human collaboration.

The Dangerous Thought

Lying in bed that night, the thought wasn’t “AI is really productive”—everyone knows that.

The thought was: how much of what I pay people is actually paying for the “human collaboration tax”?

Alignment costs. Communication costs. Emotional costs. Trial-and-error costs. The cost of replacing someone who doesn’t work out.

I started doing the math: an employee’s actual output might account for only 30% of what they cost me. What’s the other 70%? Alignment meetings, repeated explanations, waiting for feedback, navigating the friction between “I think this is better” and “you think that is better.”

AI doesn’t need any of that.

You say “this is wrong,” and it won’t ask “why is it wrong?” or “are you sure?” or “I think it’s fine.” It just fixes it.

You give a direction, and it won’t say “I need to understand the context first” or “can we schedule a meeting?” It just does it.

You say “start over,” and there’s no emotional reaction. It just starts over.

The more I thought about it, the more alarmed I became: at this rate, give me three months and I could cut the team down to just me. Not because AI is cheaper—because AI reduces the collaboration tax to near zero.

The One Thing That Stopped Me

But then I realized something else—I couldn’t do it.

Not out of kindness, not out of responsibility, not out of fear of public opinion. It was because I discovered a fundamental blind spot in AI.

AI can produce “correct” content, but it can’t produce “right” content.

These two words seem synonymous, but the gap between them is a chasm.

“Correct” means no errors, follows standards, logically consistent. AI excels at this.

“Right” means—I can’t quite articulate it. But you know it when you see it. That feeling of “yes, that’s it.”

AI can give you ten options, each one “correct.” But it can’t pick the “right” one.

AI can mimic any style, maintain any tone. But it can’t create a style that’s yours.

This thing has a name: Taste. Judgment. Aesthetic sense. Or more plainly: the ability to know what’s “right.”

What Taste Actually Is

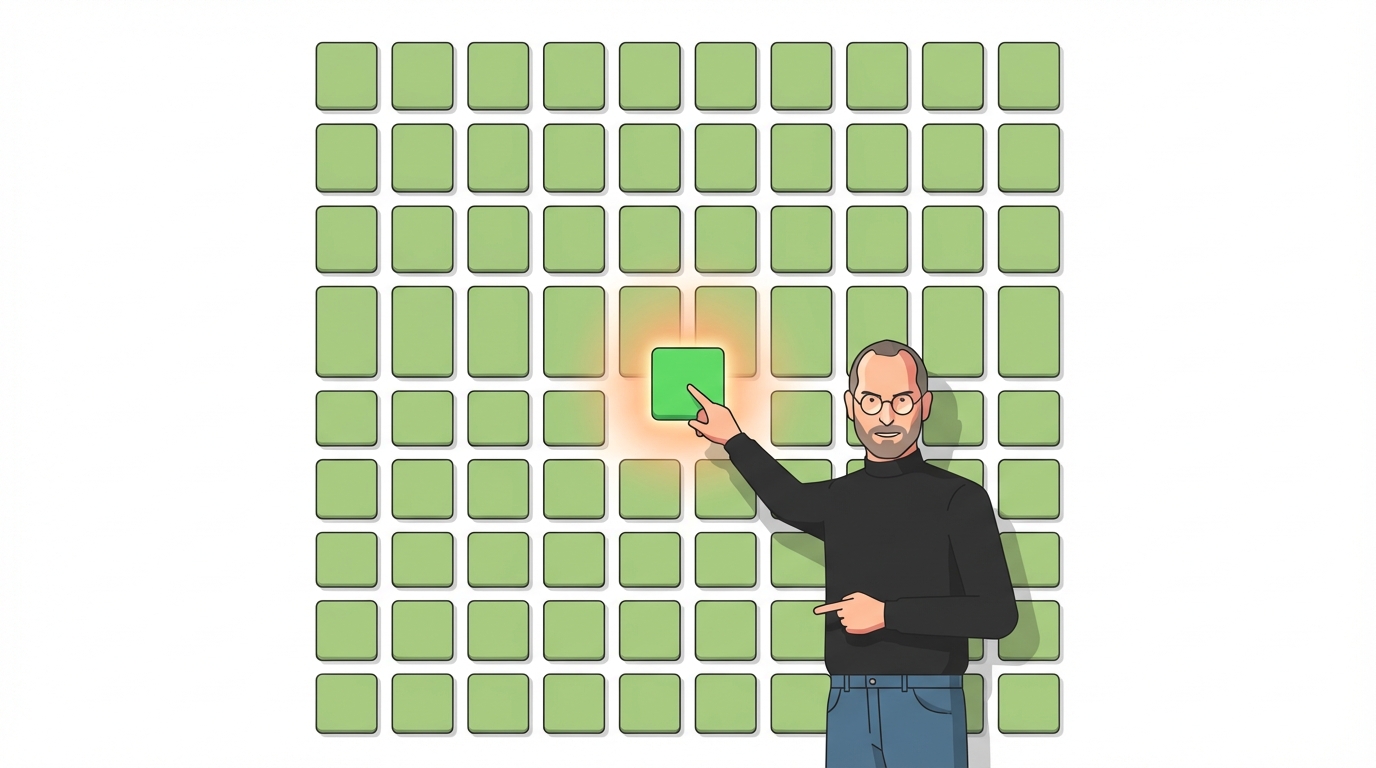

Taste is Steve Jobs looking at a thousand icon styles and saying “that green is wrong.”

The engineer says: green is green, the values are correct, user testing passed.

Jobs says: it’s wrong.

Then he changes one value, and the green becomes “right.”

Taste is Jony Ive insisting that the iPhone’s corner radius must be a precise value. Off by a hair and it’s “wrong.” No one can explain why that radius is right, but everyone can feel what it does.

Taste is looking at ten AI-generated options and knowing “none of these are right, it should be like this”—and then describing that version.

Taste isn’t knowing what’s good. There are too many good things; AI can list ten thousand.

Taste is knowing what’s “right.” There’s only one right answer, and there’s no formula for it.

But Taste Can Be “Fed” In

At this point, you might think this is an “AI has limits” article.

Not entirely.

I did something in ClawdBot: I added a refiner step to every skill.

What does that mean? Every time I say “this isn’t right,” “this doesn’t feel right,” “try a different direction,” it doesn’t just regenerate—it records my feedback, updates its understanding of the task, and iterates.

First piece of content: not satisfied. I said “too flat, needs to be sharper.”

Second piece: better, but still not quite. I said “opening is too slow, hit hard immediately.”

Third piece: right.

From then on, similar content came out with that flavor.
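The piece doesn’t show how ClawdBot’s refiner step is built, so the following is only a minimal Python sketch of the idea, under assumptions: each skill keeps a list of past critiques (a hypothetical `feedback_memory`) and folds them into every future prompt, so a correction outlives the single regeneration that prompted it. The `Skill` class, its methods, and the prompt wording are illustrative inventions, not ClawdBot’s actual code, and no real model call is shown.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Hypothetical skill with a refiner step (not ClawdBot's real implementation)."""

    name: str
    base_instructions: str
    # Critiques recorded so far; they persist across future runs of this skill.
    feedback_memory: list = field(default_factory=list)

    def refine(self, feedback: str) -> None:
        # Record the critique instead of discarding it after one regeneration.
        if feedback not in self.feedback_memory:
            self.feedback_memory.append(feedback)

    def build_prompt(self, task: str) -> str:
        # Fold every stored critique into the prompt for the next draft.
        notes = "\n".join(f"- {note}" for note in self.feedback_memory)
        guidance = f"\nLessons from past feedback:\n{notes}" if notes else ""
        return f"{self.base_instructions}{guidance}\nTask: {task}"


# Replaying the two rounds of feedback described above.
writer = Skill("post-writer", "Write a short post in the author's voice.")
writer.refine("Too flat, needs to be sharper.")
writer.refine("Opening is too slow, hit hard immediately.")
prompt = writer.build_prompt("Post about day one with an AI assistant")
print(prompt)
```

The design choice that matters here is persistence: the feedback lives on the skill rather than on one conversation, which is one plausible way later drafts could “come out with that flavor” without being told again.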

What does this mean? Taste can’t grow on its own in AI, but it can be “fed” in. Your judgment, your “that’s wrong,” your “it should be like this” can become AI’s memory.

But the prerequisite is—someone needs to do the feeding.

Someone needs to look at the first draft and say “wrong.” Someone needs to articulate what’s wrong. Someone needs to know what “right” is.

AI can iterate infinitely, but the direction of iteration needs a human. That person who sets the direction is the one with Taste.

AI’s Problem Is Being Too Smart

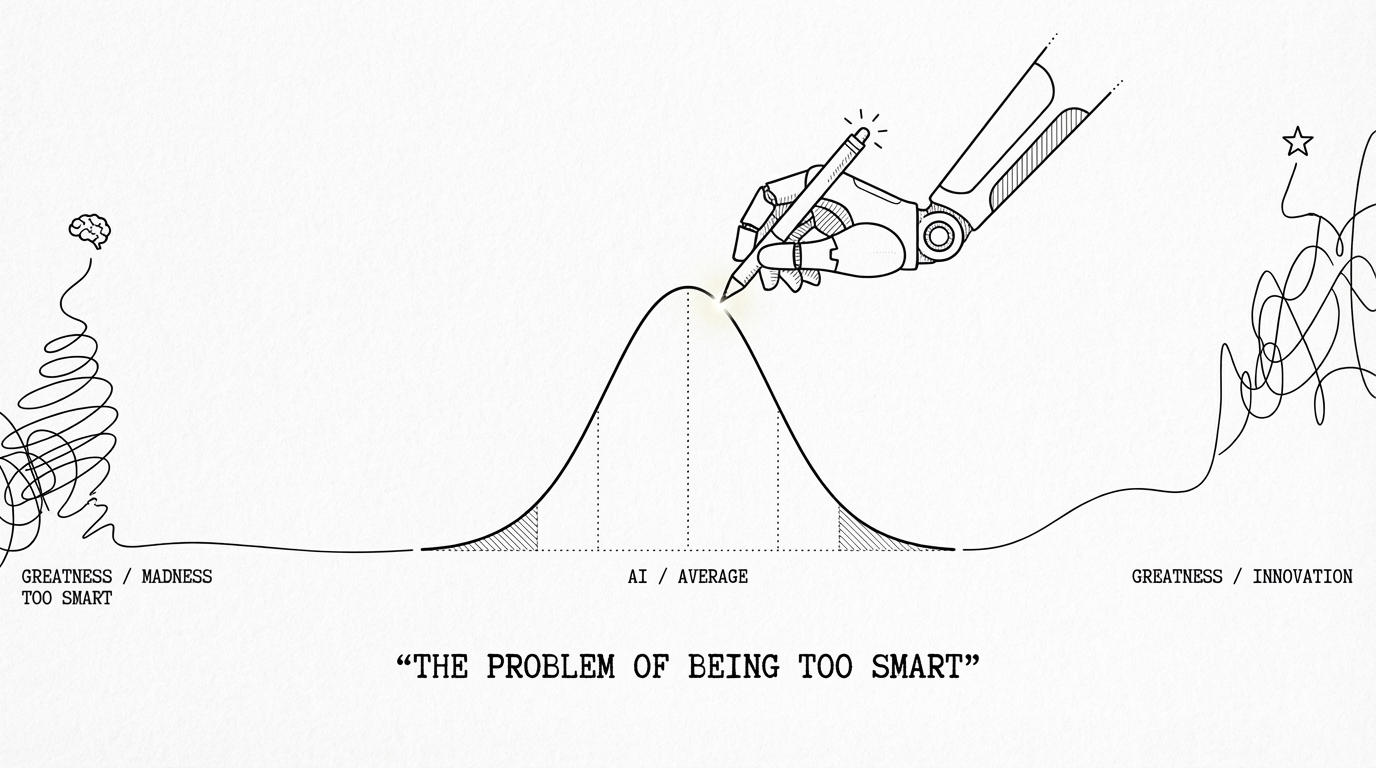

Many people worry that AI isn’t smart enough: that it makes mistakes and hallucinates.

I worry about the opposite: AI is too smart.

Smart enough to do anything, but not knowing what not to do.

Smart enough to produce content in any style, but not knowing which style your users need.

Smart enough to always give you a safe, error-free, median-quality answer.

And that’s the problem.

Great products are never averages.

Great products come from someone slamming the table and saying “I don’t care what the data says, I don’t care what user research says, it should be like this.”

AI can’t produce that “it should be like this.”

I Don’t Need People Who “Do Work”

So I’m not firing everyone after all.

But my expectations for the team have completely changed.

I no longer need people to “do work”—AI can replace most of that.

I no longer need people to “execute”—AI’s execution surpasses anyone’s. Tireless, uncomplaining, never takes a day off.

What I need are people with Taste to act as gatekeepers.

To tell me “this option is wrong, though I can’t quite say why.”

To tell me “users will find this weird, even though the data looks fine.”

To tell me “this copy is logically sound, but it doesn’t read right.”

These people? AI can’t replace them.

Future Hiring Standards

This got me thinking about a bigger question: what will companies look like in the future?

My take: future companies may truly need very few people. Not “fewer”—dramatically fewer. Ten people’s work, done by three with AI. A hundred people’s work, done by twenty with AI.

But the bar for those three or twenty will become extraordinarily high.

Not “can do the work”—AI can do the work.

Not “has experience”—AI has learned from all of humanity’s experience, richer than any individual’s.

Not “highly efficient”—nothing is more efficient than AI.

The only criterion: do you have Taste?

Can you pick the “right” one from a thousand “correct” options?

Can you look at AI’s perfect answer and say “no, it should be like this”?

Can you make decisions that can’t be validated by data, can’t be explained by logic, but are simply “right”?

An Uncomfortable Conclusion

This is an uncomfortable conclusion.

Because Taste can’t be trained. You can’t take a course called “Taste Development” and graduate with taste.

Taste can’t be quantified. You can’t put “Taste: 8/10” on your resume, and no test can measure it.

Taste can’t even be assessed in an interview. Ask “what do you think makes good design?” and anyone can articulate a theory. But theory and Taste are different things.

Taste is grown. It comes from seeing enough good and bad things, making enough right and wrong decisions, until one day you suddenly “know.”

You can’t explain how you know, but you just do.

The scarcest ability in the AI era is precisely the hardest to define, cultivate, and verify.

Is That Person You?

So, will AI replace human jobs?

My answer: yes. Almost all “execution-layer” work will be replaced. Writing, design, data analysis, responding to messages, organizing documents—these jobs won’t disappear, but the number of people doing them will shrink dramatically.

But will AI replace everyone?

My answer: no. There will always be a need for someone who says “this isn’t right.” Someone who makes the decisions AI can’t make: inexplicable, but simply “right.”

The question is—is that person you?

Are you honing your Taste?

Are you deliberately practicing “knowing what’s right,” the very ability that supposedly can’t be practiced?

Are you ready, in an era where AI can do all the work, to become that irreplaceable gatekeeper?

This is the most important thing I learned on day one with ClawdBot: the more powerful AI gets, the more valuable Taste becomes.

And for Taste, there are no shortcuts.