How a Pre-IPO Investment Platform Scaled Customer Support Without Scaling Headcount

About Jarsy

Jarsy is a tokenized Pre-IPO equity investment platform. Everyday investors can get access to companies like SpaceX, Anthropic, and Stripe, starting from $10. Its community runs on Discord and Telegram, with thousands of active users.

The problem

Jarsy sits at the intersection of fintech, crypto, and private equity. Its support is far more complex than that of a typical SaaS product.

100+ questions a day. Tokenization mechanics, fee structures, IPO lock-up periods, proof of reserves. The small team couldn’t keep up.

Everyone was firefighting. Every hour spent on reactive support was an hour not spent on community work or user activation. The team knew what they should be doing. They just couldn’t.

Product moved faster than documentation. New features, pricing changes, policy updates — docs written a month ago were already wrong. The knowledge base was always behind.

Hiring wasn’t a real option either. The product was too complex for quick onboarding. By the time a new hire got up to speed, the product had changed again.

What Lucius did

Jarsy deployed Lucius to Discord and Telegram for day-to-day user support. One human team member collaborated through Slack.

Lucius took over frontline support, answering user questions around the clock on both platforms.

The human teammate didn’t need to watch every message. When Lucius hit a question it wasn’t sure about, it replied naturally to the user and flagged the question for human review. Once the human confirmed or corrected the answer, Lucius used that answer the next time the question came up.

Lucius doesn’t work off a static FAQ. It learns from docs, past conversations, live interactions, and admin input. When the product changes, its knowledge changes too.

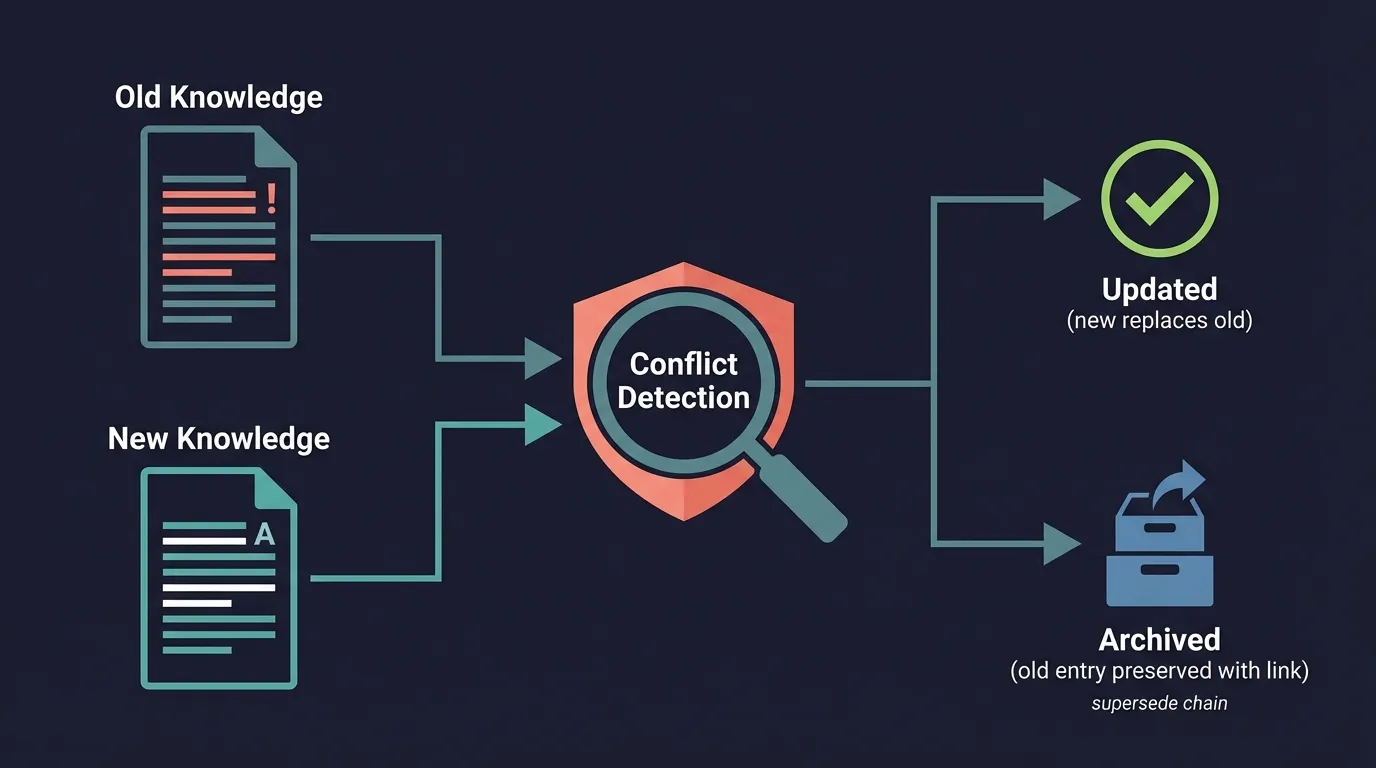

🔥 Technical highlight: knowledge conflict detection

The biggest risk in a knowledge base isn’t duplication — duplicates just waste storage. The real danger is when an old entry is wrong and nobody knows.

Every new piece of knowledge goes through four checks:

| Dimension | Question | Decision |
|---|---|---|
| Content overlap | Same topic as existing? | High overlap → skip |
| Information delta | What’s new? | Has new info → consider updating |
| Information conflict | Do they contradict? | Figure out which is right, replace the other |
| Context difference | Different scenarios? | Different contexts → keep both |

The third one — information conflict — is the hardest and most important.
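To make the table concrete, here is a minimal sketch of how the four checks could map to a decision. It is an illustration, not Lucius's actual code: the `Comparison` fields, the `Decision` names, the 0.9 overlap threshold, and the order in which the checks are applied are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    SKIP = "skip"
    UPDATE = "consider updating"
    REPLACE = "replace the wrong entry"
    KEEP_BOTH = "keep both"
    ADD = "add as new"  # assumed fallback; not a row in the table


@dataclass
class Comparison:
    """Hypothetical result of comparing a new entry with an existing one."""
    overlap: float           # topical overlap on an assumed 0.0-1.0 scale
    has_new_info: bool       # information delta
    contradicts: bool        # information conflict
    different_context: bool  # context difference


def decide(c: Comparison, high_overlap: float = 0.9) -> Decision:
    # Assumed priority: context first, so entries that only look
    # contradictory because they cover different scenarios both survive.
    if c.different_context:
        return Decision.KEEP_BOTH
    if c.contradicts:
        return Decision.REPLACE
    if c.has_new_info:
        return Decision.UPDATE
    if c.overlap >= high_overlap:
        return Decision.SKIP
    return Decision.ADD
```

Checking context before conflict is a design choice in this sketch: it avoids replacing an entry that is still correct for its own scenario.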

How it works: embeddings for fast candidate retrieval, then an LLM for semantic comparison. When there are many candidates, the system batches them to keep context length manageable. Old knowledge isn’t deleted — it’s archived with a pointer to what replaced it, like Git history.
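The retrieve-then-compare flow can be sketched roughly as follows. This assumes embedding vectors are already computed, and `llm_conflicts` is a hypothetical callable standing in for whatever LLM comparison Lucius actually runs. The function names, the `k` and `min_sim` cutoffs, and the batch size are illustrative, not Lucius's real values.

```python
import math
from typing import Callable, Iterator, Sequence

Vector = Sequence[float]
# Hypothetical LLM check: takes the new text and a batch of {id: text}
# candidates, returns the ids it judges to contradict the new text.
LLMCheck = Callable[[str, dict[str, str]], list[str]]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_candidates(query: Vector, store: dict[str, Vector],
                   k: int = 20, min_sim: float = 0.5) -> list[str]:
    """Stage 1: cheap embedding search for entries on a similar topic."""
    scored = [(cosine(query, vec), entry_id) for entry_id, vec in store.items()]
    scored = [pair for pair in scored if pair[0] >= min_sim]
    scored.sort(reverse=True)
    return [entry_id for _, entry_id in scored[:k]]


def batches(items: list[str], size: int) -> Iterator[list[str]]:
    """Split candidates into fixed-size chunks to bound prompt length."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def find_conflicts(new_text: str, new_vec: Vector,
                   store_vecs: dict[str, Vector],
                   store_texts: dict[str, str],
                   llm_conflicts: LLMCheck,
                   batch_size: int = 5) -> list[str]:
    """Stage 2: ask the LLM about each batch of candidates in turn."""
    conflicts: list[str] = []
    for batch in batches(top_candidates(new_vec, store_vecs), batch_size):
        conflicts.extend(llm_conflicts(new_text, {i: store_texts[i] for i in batch}))
    return conflicts
```

The split keeps the expensive step small: the embedding search narrows the store to a handful of candidates, and only those reach the LLM, one batch per call.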

Here’s a real example: a user asked about the difference between SpaceX Live and Presale. Two support agents gave different answers. Both got extracted as knowledge entries. The system caught the conflict, determined the newer one was more accurate, and swapped them. After that, everyone got the right answer. No one had to do anything.
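A swap like this one, where the old entry is archived with a pointer instead of being deleted, could look something like the sketch below. The `Entry` and `KnowledgeBase` classes and their field names are hypothetical. They illustrate the archive-with-pointer idea, not Lucius's actual data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Entry:
    entry_id: str
    text: str
    archived: bool = False
    superseded_by: Optional[str] = None  # id of the entry that replaced this one


class KnowledgeBase:
    def __init__(self) -> None:
        self.entries: dict[str, Entry] = {}

    def add(self, entry: Entry) -> None:
        self.entries[entry.entry_id] = entry

    def supersede(self, old_id: str, new_entry: Entry) -> None:
        """Archive old_id and point it at new_entry instead of deleting it."""
        if new_entry.entry_id == old_id:
            raise ValueError("replacement must have a new id")
        old = self.entries[old_id]  # raises KeyError if old_id is unknown
        self.add(new_entry)
        old.archived = True
        old.superseded_by = new_entry.entry_id

    def active(self) -> list[Entry]:
        """Entries that may be used to answer users."""
        return [e for e in self.entries.values() if not e.archived]

    def current(self, entry_id: str) -> Entry:
        """Follow superseded_by pointers to the live version of an entry."""
        entry = self.entries[entry_id]
        while entry.superseded_by is not None:
            entry = self.entries[entry.superseded_by]
        return entry
```

Because the archived entry keeps its text and a pointer forward, anyone auditing an answer can trace which entry replaced which, much like walking a commit history.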

Results

Both platforms covered around the clock. Users get responses in minutes, no matter the time zone.

The team stopped firefighting. With routine questions handled, they could finally do community work, run events, and focus on activation — the stuff they’d been meaning to do for months.

Knowledge stopped going stale. When old info contradicted new info, Lucius flagged it. What used to be a painful manual process just happened on its own.

The math: one AI plus one human, doing the work of a full support team. Jarsy didn’t need to hire more people, and response quality actually got more consistent.

In their words

“Lucius keeps learning and proactively alerts us when older knowledge conflicts with new information. It helps us spot what’s outdated — something we struggled to do before.” — Jarsy Team